Space & Physics

Why does superconductivity matter?

Superconductivity was discovered on April 8, 1911, by H. Kamerlingh Onnes, who was studying the resistance of solid mercury (Hg) at cryogenic temperatures. Helium had been liquefied only a few years earlier, in 1908. At T = 4.2 K, the resistance of Hg disappeared abruptly. This marked a transition to a phase that had never been seen before: a new state of matter that is resistanceless and strongly diamagnetic. Kamerlingh Onnes sent two reports to the KNAW (the Royal Netherlands Academy of Arts and Sciences), in which he preferred calling the zero-resistance state ‘superconductivity’.

Another discovery in the same experiment went unnoticed: the transition of liquid helium (He) at about 2.2 K, the so-called λ transition, below which He becomes a superfluid. However, we shall skip that discussion for now. A couple of years later, superconductivity was found in lead (Pb) at 7 K. Much later, in 1941, niobium nitride was found to superconduct below 16 K. The burning question in those days was: what would the conductivity or resistivity of metals be at very low temperatures?

The question arose from Lord Kelvin’s suggestion that the resistivity of a metal first decreases with falling temperature and finally climbs to infinity at zero kelvin, because the electrons’ mobility becomes zero at 0 K, yielding zero conductivity and hence infinite resistivity. Kamerlingh Onnes and his assistant Jacob Clay studied the resistance of gold (Au) and platinum (Pt) down to T = 14 K. The resistance decreased linearly down to 14 K; lower temperatures could not be reached until liquid He became available in 1908.

In fact, the experiment with Au and Pt was repeated after 1908. For Pt, the resistivity became constant below 4.2 K, while Au was found to superconduct at very low temperatures. Thus, Lord Kelvin’s notion about infinite resistivity at very low temperatures was incorrect. For Hg, Onnes found that at 3 K (below the transition), the normalised resistance is about 10⁻⁷. Above 4.2 K, the resistance reappears. The transition is very sharp: the resistance falls abruptly to zero within a temperature window of 10⁻⁴ K.


Perfect conductors, superconductors, and magnets

All superconductors are normal metals above the transition temperature. If we ask where in the periodic table most of the superconductors are located, the answer throws up some surprises. The good metals are rarely superconducting. Examples are Ag, Au, Cu, Cs, etc., which have transition temperatures of the order of ∼0.1 K, while bad metals, such as niobium alloys, copper oxides, and MgB₂, have relatively higher transition temperatures. Thus, bad metals are, in general, good superconductors.

An important quantity in this regard is the mean free path of the electrons (above Tc). For good metals (the bad superconductors), it is usually a few hundred Å, whereas for bad metals (the good superconductors), it remains small, of the order of a few Å, as the electrons are strongly coupled to phonons. The orbital overlap is large in a superconductor. In good metals, the orbital overlap is small, and often they become good magnets. In the periodic table, elements such as Al, Bi, Cd, Ga, etc., become good superconductors, while the 3d transition elements Cr, Mn, and Fe are bad superconductors and in fact form good magnets.

For all of them, whether superconductors or magnets, there is a large density of states at the Fermi level. So, a large number of electronic states is necessary for the electrons in these systems to be able to condense into a superconducting state (or even a magnetic state). The nature of the electronic wavefunction determines whether they develop superconducting order or magnetic order. For example, electronic wavefunctions have a large spatial extent in superconductors, while they are short-ranged in magnets.

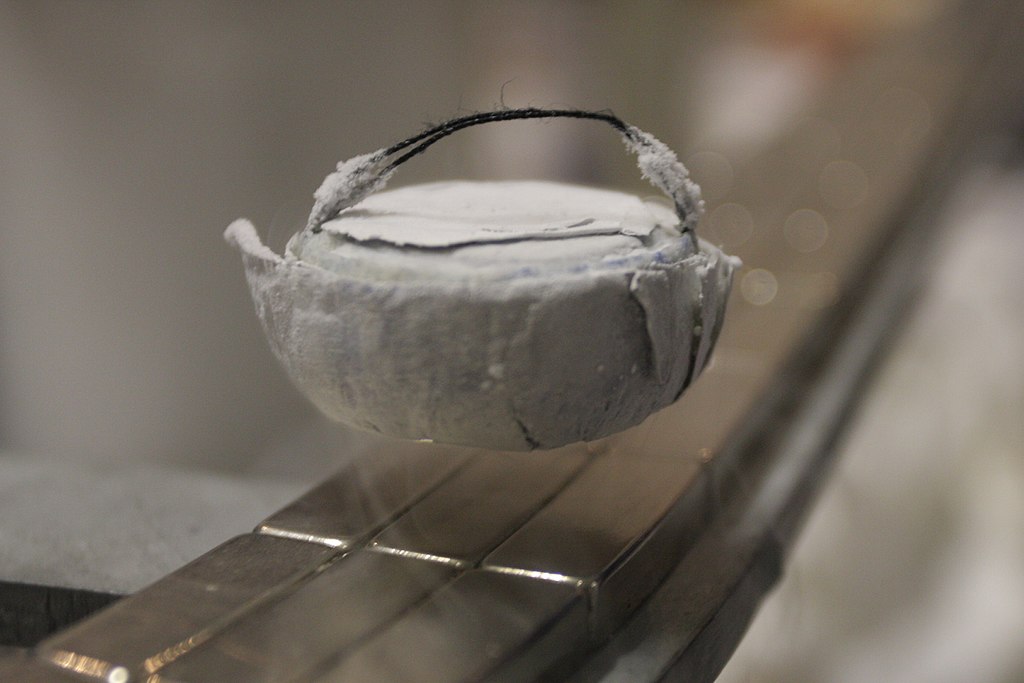

Meissner effect

The near-complete expulsion of the magnetic field from a superconducting specimen is called the Meissner effect. In the presence of a magnetic field, current loops are generated at the periphery so as to block the entry of the external field into the specimen. If a magnetic field were present within a superconductor, then, by Ampère’s law, there would be a normal current within the sample. However, there is no normal current inside the specimen. Thus, there cannot be any magnetic field. For this reason, superconductors are known as perfect diamagnets with a very large diamagnetic susceptibility. By comparison, even the best-known non-superconducting diamagnets have magnetic susceptibilities of the order of 10⁻⁵. Thus, diamagnetism can be considered a defining property of superconductors, distinct from zero electrical resistance.
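The phrase "perfect diamagnet" can be made quantitative with a one-line argument. The sketch below assumes SI units and an idealised bulk sample from which the field is completely expelled (B = 0 inside); the symbols H, M and χ are standard notation introduced here for illustration, not taken from the text above.

```latex
\begin{align}
  \mathbf{B} &= \mu_0\,(\mathbf{H} + \mathbf{M}) = 0
  && \text{(complete flux expulsion inside the sample)} \\
  \Rightarrow\quad \mathbf{M} &= -\mathbf{H},
  \qquad \chi \equiv \frac{M}{H} = -1 .
\end{align}
```

Under these assumptions the susceptibility of a superconductor is about five orders of magnitude larger in magnitude than the 10⁻⁵ typical of ordinary diamagnets.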


A typical experiment demonstrating the Meissner effect can be thought of as follows: take a superconducting sample (T < Tc), sprinkle iron filings around it, and switch on the magnetic field. The iron filings will line up in concentric circles around the specimen. This shows that the flux lines are expelled from the sample and pass around it, which is what makes the filings line up.

Distinction between perfect conductors and superconductors

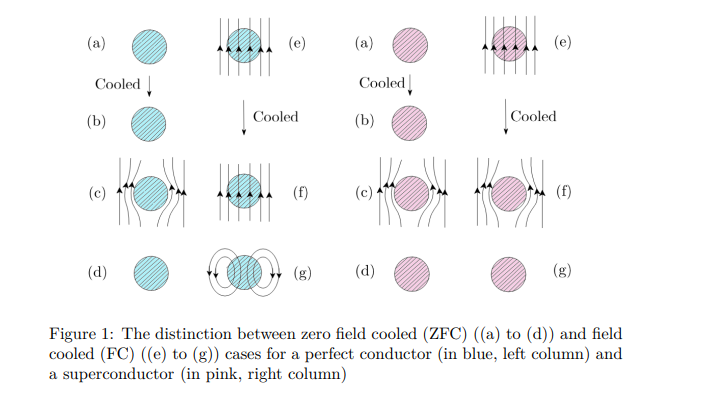

The distinction between a perfect conductor and a superconductor is brought out by the field-cooled (FC) and zero-field-cooled (ZFC) cases, as shown in Fig. 1.

In the zero-field-cooled case, the temperature of both the perfect conductor and the superconductor is lowered from T > Tc to T < Tc in the absence of an external magnetic field (see the left panel for both in Fig. 1). A magnetic field is then applied, which is expelled owing to the Meissner effect. Finally, the field is withdrawn. If, however, cooling is done in the presence of an external field, then after the field is withdrawn the flux lines remain trapped in a perfect conductor, whereas the superconductor is left with no memory of the applied field, just as in the zero-field-cooled case. So, superconductors have no memory, while perfect conductors do.
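The two cooling protocols can be summarised in a few lines of code. This is a deliberately minimal sketch, not a physical simulation: it assumes an ideal perfect conductor (zero resistance only forbids changes of the interior flux) and an ideal superconductor (interior flux is always zero below Tc). The function names and the arbitrary field unit are hypothetical, introduced only for illustration.

```python
def interior_flux_below_tc(material: str, field_at_cooling: float) -> float:
    """Interior flux density once the sample is below Tc (arbitrary units).

    Idealised rules assumed here:
    - perfect conductor: the interior flux is frozen at the value it had
      when the sample was cooled through Tc;
    - superconductor: the Meissner effect expels the flux whatever the history.
    """
    if material == "perfect_conductor":
        return field_at_cooling
    if material == "superconductor":
        return 0.0
    raise ValueError(f"unknown material: {material!r}")


def flux_after_field_removed(material: str, protocol: str, b0: float = 1.0) -> float:
    """Interior flux left after cooling by the given protocol and removing the field."""
    if protocol == "ZFC":
        field_at_cooling = 0.0   # cooled with no field applied
    elif protocol == "FC":
        field_at_cooling = b0    # cooled with the field already on
    else:
        raise ValueError(f"unknown protocol: {protocol!r}")
    # Below Tc neither idealised material lets its interior flux change,
    # so switching the external field on or off afterwards makes no difference.
    return interior_flux_below_tc(material, field_at_cooling)


if __name__ == "__main__":
    for material in ("perfect_conductor", "superconductor"):
        for protocol in ("ZFC", "FC"):
            print(material, protocol, flux_after_field_removed(material, protocol))
```

Only the field-cooled perfect conductor ends with non-zero interior flux, which is the "memory" referred to above.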

Microscopic considerations: BCS theory

The first microscopic theory of superconductivity was proposed by Bardeen, Cooper, and Schrieffer (BCS) in 1957, which earned them the Nobel Prize in 1972. The underlying assumption was that an attractive interaction between electrons is possible, mediated by phonons. Thus, electrons form bound pairs under certain conditions: (i) the two electrons lie in the vicinity of the filled Fermi sea, within an energy range ħω_D (set by the phonons, or the lattice); (ii) phonons, or the underlying lattice, are involved, as confirmed by isotope-effect experiments, which show that the transition temperature varies with the ionic mass M, approximately as 1/√M. Since the Debye frequency depends on the ionic mass, this implies that the lattice must be involved. A small calculation shows that an attractive interaction is possible in a narrow range of energy. This attractive interaction makes the system unstable, and long-range order develops via symmetry breaking.

In a book by one of the discoverers, Schrieffer, he draws an analogy with a dance floor full of couples, each dancing with their own partner and completely oblivious to every other couple in the room. The couples, while dancing, drift from one end of the room to the other but do not collide with each other. This implies less dissipation in the transport of a superconductor.

The BCS theory explained most of the features of superconductors known at that time, such as: (i) the discontinuity of the specific heat at the transition temperature, Tc; (ii) the involvement of the lattice via the isotope effect; (iii) the estimation of Tc and the energy gap. The values of Tc and the gap are confirmed by tunnelling experiments across metal-superconductor (M-S) or metal-insulator-superconductor (MIS) junctions; Giaever was awarded the Nobel Prize in 1973 for his work on these experiments; (iv) the Meissner effect, which can be explained within a linear-response regime; (v) the temperature dependence of the energy gap, whose gradual vanishing confirms a second-order phase transition.

Most of the features of conventional superconductors can be explained using BCS theory. Another salient feature of the theory is that it is non-perturbative: there is no small parameter in the problem. The calculations were done with a variational theory in which the energy is minimised with respect to some free parameters of the variational wavefunction, known as the BCS wavefunction.
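The estimates of Tc, the energy gap and the isotope effect mentioned above can be put into numbers with the standard weak-coupling BCS relations. The sketch below assumes the textbook forms Tc ≈ 1.13 Θ_D exp(−1/N(0)V) and Δ(0) ≈ 1.764 k_B Tc, together with an isotope exponent of 1/2; none of these formulas is spelled out in the article itself, and the function names and the input values in the demo are hypothetical placeholders rather than data for any real material.

```python
import math

K_B_MEV_PER_K = 0.08617333  # Boltzmann constant in meV per kelvin


def bcs_tc(debye_temperature_k: float, coupling: float) -> float:
    """Weak-coupling BCS estimate Tc = 1.13 * Theta_D * exp(-1 / (N(0)V)).

    `coupling` is the dimensionless product N(0)V; the formula is only
    meaningful for small positive values (weak coupling).
    """
    if coupling <= 0:
        raise ValueError("coupling N(0)V must be positive")
    return 1.13 * debye_temperature_k * math.exp(-1.0 / coupling)


def zero_temperature_gap_mev(tc_k: float) -> float:
    """Weak-coupling BCS gap at T = 0, Delta(0) = 1.764 * k_B * Tc, in meV."""
    return 1.764 * K_B_MEV_PER_K * tc_k


def isotope_shifted_tc(tc_k: float, mass_ref: float, mass_new: float,
                       alpha: float = 0.5) -> float:
    """Rescale Tc to a different isotope mass, assuming Tc scales as M**(-alpha)."""
    return tc_k * (mass_ref / mass_new) ** alpha


if __name__ == "__main__":
    # Hypothetical inputs chosen only to exercise the functions.
    tc = bcs_tc(debye_temperature_k=300.0, coupling=0.25)
    print(f"Tc estimate: {tc:.2f} K")
    print(f"gap at T = 0: {zero_temperature_gap_mev(tc):.3f} meV")
    print(f"Tc for a 2% heavier isotope: {isotope_shifted_tc(tc, 1.00, 1.02):.2f} K")
```

The exponential dependence on the coupling is the reason Tc is so sensitive to material details, while the isotope shift is a small, smooth correction.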

Unconventional Superconductors: High-Tc Cuprates

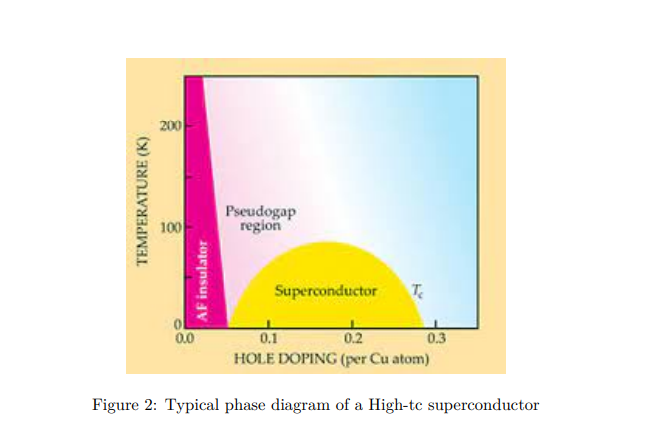

This is a class of superconductors in which two-dimensional copper oxide planes play the main role, and superconductivity occurs in these planes. Doping these planes with mobile carriers makes the system unstable towards superconducting correlations. At zero doping, the system is an antiferromagnetic insulator (see Fig. 2). With about 15% to 20% doping with foreign elements such as strontium (Sr), for example in La₂₋ₓSrₓCuO₄, the system becomes superconducting. Two things are surprising in this regard: (i) the proximity of the insulating state to the superconducting state; (ii) when the temperature of the superconducting system is raised, instead of going into a metallic state, it shows several unfamiliar features that are very unlike the known Fermi-liquid characteristics. This state is called a strange metal.

In fact, there are some signatures of pre-formed pairs in the so-called metallic state, known as the pseudogap phase. Since the starting point from which one should build a theory is missing, a complete understanding of the mechanism behind the phenomenon has not been achieved. It remains a theoretical riddle.


Engineers Develop Dual-Mode Propulsion System for Next-Generation Small Satellites

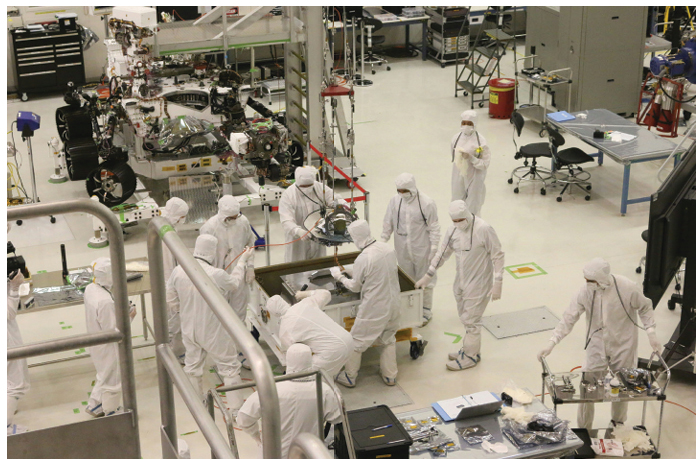

MIT engineers have developed a dual-mode propulsion system that combines chemical and electric thrusters, giving small satellites greater flexibility in space

A dual-mode propulsion technology developed by MIT engineers could give small satellites the ability to perform both powerful manoeuvres and fuel-efficient long-distance travel using a single propellant source.

Small satellites have transformed space research by making missions cheaper and more accessible. Yet they continue to face a fundamental limitation: propulsion.

Traditional chemical thrusters provide powerful bursts of speed but consume large amounts of fuel. Electric propulsion systems, on the other hand, are highly efficient but generate only gentle thrust over long periods. Spacecraft designers have typically had to choose between the two.
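The trade-off between the two thruster types can be illustrated with the Tsiolkovsky rocket equation, which relates the propellant needed for a given velocity change to the specific impulse of the thruster. This is a rough sketch only: the specific-impulse values and the 500 m/s manoeuvre in the demo are illustrative round numbers assumed here, not figures from the MIT study, and the function name is hypothetical.

```python
import math

G0 = 9.80665  # standard gravity in m/s^2, used to convert specific impulse to exhaust velocity


def propellant_fraction(delta_v_m_s: float, isp_s: float) -> float:
    """Fraction of the initial spacecraft mass that must be propellant.

    From the Tsiolkovsky rocket equation, delta_v = Isp * g0 * ln(m0 / mf),
    so the propellant fraction is 1 - mf/m0 = 1 - exp(-delta_v / (Isp * g0)).
    """
    if isp_s <= 0:
        raise ValueError("specific impulse must be positive")
    return 1.0 - math.exp(-delta_v_m_s / (isp_s * G0))


if __name__ == "__main__":
    delta_v = 500.0  # m/s, illustrative manoeuvre budget (assumption)
    for label, isp in (("chemical (assumed Isp 250 s)", 250.0),
                       ("electric (assumed Isp 1000 s)", 1000.0)):
        print(f"{label}: {propellant_fraction(delta_v, isp):.1%} of initial mass")
```

With these assumed numbers the chemical thruster needs roughly 18% of the spacecraft's initial mass as propellant and the electric thruster about 5%, which is the sense in which electric propulsion is "highly efficient"; the equation says nothing about how long the manoeuvre takes, which is where chemical thrusters win.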

Engineers at the Massachusetts Institute of Technology (MIT) now believe they have found a way to combine both approaches in a single compact system, potentially giving small satellites the agility of much larger spacecraft.

The breakthrough centres on a special propellant capable of powering both chemical and electric thrusters from the same fuel tank.

“If you can have chemical and electrical propulsion in one small package, it’s the best of both worlds,” said Amelia Bruno, lead author of the study and a former postdoctoral researcher in MIT’s Department of Aeronautics and Astronautics, in a media statement.

“This opens the door for small satellites to do even more science, more observations, and more interesting missions, all on a smaller and cheaper platform.”

The findings have been published in the Journal of Propulsion and Power.

Dual-Mode Propulsion System Combines Two Technologies

The MIT team tested a propellant known as Advanced SpaceCraft Energetic Non-Toxic propellant, or ASCENT. Originally developed by the U.S. Air Force as a safer alternative to hydrazine, ASCENT was designed for chemical propulsion systems.

Researchers discovered that the same propellant can also power miniature electric propulsion devices known as electrospray thrusters.

These tiny thrusters use electric fields to charge particles within a liquid propellant and eject them into space, creating precise and fuel-efficient thrust. While chemical thrusters are ideal for rapid manoeuvres, electrospray systems are better suited for gradual course corrections and long-duration journeys.

By enabling both systems to share a single fuel source, the technology could significantly reduce the size and complexity of propulsion systems aboard CubeSats and other small spacecraft.

Dual-Mode Propulsion System Could Expand Deep-Space Missions

The implications extend beyond Earth orbit.

CubeSats have become popular for scientific research and technology demonstrations, but their limited propulsion capabilities have restricted their use in deep-space missions.

According to Paulo Lozano, the Miguel Alemán Velasco Professor of Aeronautics and Astronautics at MIT, the new system could change that.

“We could send CubeSats to Mars, or the asteroid belt, where they could make the journey slowly, using electrospray thrusters,” he said.

“You could then use your chemical thrusters to quickly move to look at interesting features. You could have a lot more flexibility to do a lot more things.”

Testing the Technology

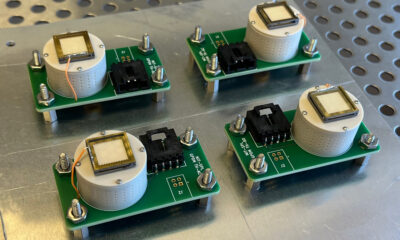

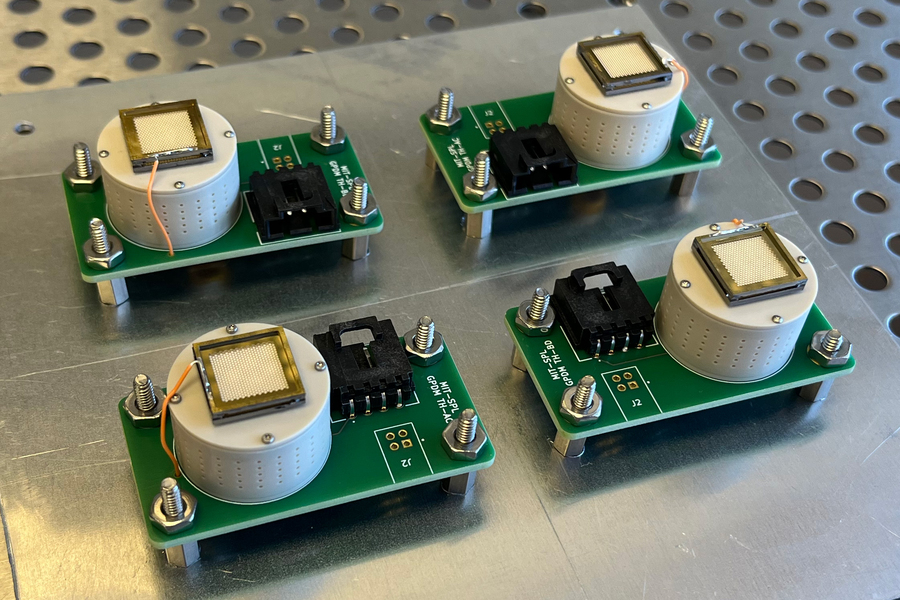

To evaluate the propellant’s performance, the researchers filled small CubeSat reservoirs with ASCENT and tested them in a vacuum chamber designed to simulate conditions in space.

During the experiments, electrospray thrusters powered by ASCENT successfully generated thrust for extended periods, in some cases operating continuously for up to 100 hours.

NASA Mission Will Put the Technology to the Test

The next major test will come later this year.

MIT researchers are working with NASA on the Green Propulsion Dual Mode mission, a CubeSat that will carry both chemical and electrospray thrusters powered by a single propellant tank. Scheduled for launch in November, the mission will be the first demonstration of such a system in a small spacecraft.

If successful, the mission could help pave the way for a new generation of versatile satellites capable of switching between rapid manoeuvres and highly efficient long-distance travel.


India Semiconductor Mission: ‘It’s Not About Fabs. It’s About Building An Entire Ecosystem’

India Semiconductor Mission is reshaping the country’s chip ambitions. Neelkanth Mishra explains the opportunities, challenges and long-term strategy.

As India pushes ahead with its semiconductor ambitions under the India Semiconductor Mission (ISM), questions remain about where the country can realistically compete and how long it will take to build a viable ecosystem. In this exclusive conversation with Education Publica Editor Dipin Damodharan in Mumbai, Neelkanth Mishra, Chief Economist at Axis Bank and Head of Global Research at Axis Capital, draws on two decades of experience tracking the global semiconductor industry to explain India’s advantages, constraints, and long-term trajectory. He is also a member of the advisory committee of the government’s India Semiconductor Mission and part-time Chairperson of the Unique Identification Authority of India (UIDAI). Edited excerpts.

How the India Semiconductor Mission Is Shaping the Industry

Let me start with asking something out of curiosity – how did you get interested in semiconductors in the first place?

When I joined Credit Suisse First Boston in 2003 in Singapore, the person who hired me was heading Asia technology research and was also the lead analyst for semiconductor foundries such as TSMC and UMC. I was hired to cover IT services, but he wanted help in building the semiconductor research franchise.

That led me to start reading about how chips are made. At that time, the industry was transitioning from 130-nanometer to 90-nanometer nodes, and copper was being introduced to replace aluminum due to resistance issues. There were challenges around yields because copper was seeping into substrates. I remember writing my first note around this issue after going through technical papers.

That note became quite popular, and it gave me the confidence to continue covering semiconductors. I spent a lot of time travelling to Taiwan, studying DRAM cycles, capex cycles, node transitions, and the broader global semiconductor ecosystem. Eventually, I moved to Taipei and began covering chip design companies such as MediaTek.

At that time, were you also tracking what was happening in India?

India has had chip design activity for a long time, even in the 1990s. Companies like Texas Instruments, Cadence, and Synopsys were recruiting from Indian campuses. Many engineers built long careers in these firms.

However, India did not have domestic chip manufacturing or strong Indian-owned chip design companies. By the mid-2000s, global firms such as Nvidia, Broadcom, and Intel began setting up design centres in India. So the design ecosystem was growing, but it was largely driven by global companies.

It is only in the last four to five years that more serious efforts have begun toward building Indian-owned capabilities.

So what changed in the last few years? Was it policy, or something else?

Policy has played a role. The Design Linked Incentive (DLI) scheme has been an important catalyst. We are seeing some early success. At the same time, there is also an evolutionary factor at play. Engineers who moved abroad 20–25 years ago are now at a stage where they have both the experience and financial capacity to take entrepreneurial risks. Many also want to return to India.

Another important factor is the growth of India’s electronics manufacturing ecosystem. As assembly volumes increase, there is greater awareness of what products need to be designed. Without that visibility into OEM pipelines, it is difficult to design chips.

Schemes like PLI for electronics manufacturing have helped build that awareness and ecosystem. As downstream industries grow, upstream opportunities in chip design also become clearer.

The US is good at designing chips, while Taiwan and South Korea are good at manufacturing. There’s always this question – should India focus on design, manufacturing, or packaging?

There is no either/or. India needs to participate across the value chain.

We already have a natural advantage in chip design, with about 20% of global design engineers based in India. Design is also less capital-intensive compared to manufacturing. In a $10 chip, $5–6 of value is captured by the designer, and in some cases even more.

At the same time, semiconductor manufacturing is a geopolitical necessity. It is not just a commercial issue but also a matter of national security. That is why governments provide significant subsidies for fabs.

However, manufacturing is a low-return business globally. Only a few companies like TSMC and Samsung have consistently generated returns above their cost of capital. Much of the value in the ecosystem is captured by design firms and by capital equipment suppliers, which operate in highly concentrated markets.

Therefore, India must build capabilities across the chain—from design to manufacturing to equipment and materials—if it wants meaningful value capture.

When we talk about building an ecosystem, how complex is that in reality?

It is extremely complex. The industry has multiple layers of specialization. For example, electronic design automation (EDA) tools are dominated by a few companies. Lithography, especially extreme ultraviolet, is controlled by a single company globally. Equipment for deposition, wafer slicing, and testing is also concentrated among a handful of firms.

Even the chemicals used in wafer cleaning are highly sophisticated and require extraordinary purity. A single wafer can take months to manufacture, involving hundreds of process steps.

So when we talk about semiconductors, it is not just about fabs. It is about building an entire ecosystem—equipment, materials, design, testing, and packaging. This is why it is a 15–20 year journey at least.

What about talent? Are we ready from a skills perspective?

In general, skilling in India is more of a demand problem than a supply problem. If there is sufficient demand, the industry tends to create the supply.

For example, there is already discussion about developing tens of thousands of chip testing engineers in India, and that is achievable. However, for cutting-edge technologies, there is a need for deeper investment in research.

As we move toward more advanced nodes—such as 7 to 12 nanometers—we will require significant high-end research capabilities. Countries like China took over 25 years to reach that level.

We need to invest not just in near-commercial research (TRL 6–9) but also in fundamental research (TRL 1–4), which creates long-term intellectual property. Government initiatives like the Anusandhan National Research Fund are steps in that direction, but overall R&D spending needs to increase.

What role should industry play in R&D?

Industry participation is essential. The government can catalyse investment, but companies will invest when they see potential returns.

We have seen this in pharmaceuticals, where Indian firms moved into R&D after reaching limits in generics. A similar shift can happen in semiconductors, but it will require scale, capital, and long-term commitment.

Where do startups fit into this picture?

Startups will have a significant role, particularly in chip design. Manufacturing is extremely capital-intensive, requiring billions of dollars in investment, which limits the role of startups.

However, in design and innovation, startups can play an important part. Many innovations in the semiconductor ecosystem originate from smaller firms, which are later acquired or integrated into larger companies.

To produce a globally competitive company, you need a large ecosystem of startups, experimentation, and risk-taking.

Coming to policy – what did India learn from ISM 1.0?

ISM 1.0 (India Semiconductor Mission) was a learning curve for everyone. It helped the government understand how to evaluate proposals, support companies, and manage operational challenges.

There were practical issues—from customs procedures affecting sensitive equipment to ensuring uninterrupted power supply. Semiconductor manufacturing requires extremely high reliability, and even a brief power outage can cause significant losses.

Another important learning is that the global industry is now more comfortable working with India. While India may not yet be the first choice, confidence has improved due to visible commitment and progress.

This increased comfort allows India to be more ambitious with ISM 2.0.

How important is policy stability?

Policy continuity is very important because these are long-term projects. Global firms value consistency in decision-making and relationships.

There is also a growing effort to ensure continuity in leadership within government institutions, which helps build expertise and trust over time.

Do we need a dedicated semiconductor research institution like IMEC?

There are existing efforts, such as the facility in Mohali, which supports defence-related applications. There are also discussions around creating IMEC-like research centres.

However, over time, the private sector will need to take a larger role in research. Government support is critical in the early stages, but for sustained innovation and competitiveness, industry-led initiatives are more effective. The government can act as the binding force or the catalyst that brings people to the table; however, I believe it is ultimately better if the private sector takes the lead. This creates a natural incentive for innovation and rigorous research. Beyond a certain point, government support becomes both fiscally unfeasible and operationally undesirable.

If we look ahead 20 years, where do you see India?

On the design side, India can become much more significant. It is possible to see 10–15 large chip design companies and many smaller firms emerging.

On the manufacturing side, we could have several large fabs and potentially global players establishing operations in India, especially if a strong domestic design ecosystem develops.

For example, companies like TSMC tend to follow innovation ecosystems. If Indian design firms grow in scale and sophistication, it could attract global manufacturing investments.

Let me end with this – can India produce a company like Nvidia?

It is possible, but it requires a large ecosystem. Many Indians already occupy senior roles in global semiconductor companies and are involved in cutting-edge design work.

To create a company of that scale, you need risk capital, entrepreneurial ambition, and a large number of startups. In other markets, hundreds of firms compete, and one eventually emerges as a dominant player.

So it is not about a single effort—it is about building an ecosystem where many experiments take place, and success emerges from that.


India’s First Private Theoretical Physics Institute Bets on Curiosity-Driven Research

India’s first private theoretical physics institute aims to strengthen fundamental research and scientific excellence through philanthropy

India’s first privately funded Theoretical Physics Institute signals a new approach to research

As India seeks to strengthen its scientific capabilities and emerge as a developed nation by 2047, a new initiative is testing whether private philanthropy can play a larger role in advancing fundamental science.

The Lodha Foundation has launched the Lodha Theoretical Physics Institute (LTPI), which it describes as India’s first fully privately funded institute dedicated exclusively to theoretical physics research. The institute aims to support long-term, curiosity-driven scientific inquiry by bringing together leading researchers from India and around the world.

Why a private theoretical physics institute matters

The launch comes at a time when discussions about India’s research ecosystem are increasingly focused on how the country can not only expand scientific output but also build globally competitive institutions capable of producing breakthrough discoveries.

At the heart of the new institute is a belief that transformative technological revolutions often originate from advances in basic science.

Theoretical physics may appear distant from everyday concerns, but history suggests otherwise. Quantum mechanics, once regarded as a highly abstract field, laid the foundation for semiconductors, lasers, modern electronics and many technologies that shape contemporary life. Similar advances in fundamental science continue to influence emerging areas such as quantum computing, advanced materials and next-generation communications.

The Lodha Foundation believes that supporting excellence in foundational research is critical for India’s long-term development.

“At the Lodha Foundation, we believe that pursuing excellence in everything we do is essential to creating the greatest possible impact. Whether it is identifying talented minds across the country and supporting them through transformative programmes, investing in urban sustainability solutions, or fostering innovation and research through institutions such as the Lodha Mathematical Sciences Institute and now the Lodha Theoretical Physics Institute, our goal is to contribute meaningfully to India’s journey towards becoming a developed nation,” said Abhishek Lodha, Trustee of the Lodha Foundation and Managing Director and CEO of Lodha Developers.

The institute will be led by Jainendra K. Jain, one of the world’s leading theoretical physicists and a recipient of the Wolf Prize in Physics. Jain’s pioneering work on composite fermions has significantly advanced the understanding of correlated quantum matter and continues to shape modern theoretical physics.

According to Jain, investment in theoretical physics is ultimately an investment in the future of science and technology.

“Theoretical physics lies at the heart of our understanding of nature. Advances in theoretical physics have historically shaped scientific thought and laid the foundation for transformative developments across multiple fields,” he said.

Theoretical Physics Institute and National Aspirations

Jain also linked the institute’s mission to India’s broader national aspirations.

“If India is to become a developed nation by 2047, it will need strong institutions backed by world-class scientific research infrastructure. In that context, LTPI is a significant step forward, as it is the country’s first fully privately funded institute dedicated to physics research,” he said.

Unlike many research initiatives that focus on short-term outcomes or commercial applications, LTPI intends to create an environment where scientists can pursue ambitious questions over extended periods of time. The institute plans to support focused research programmes, international conferences and collaborations among leading physicists.

Ashish Kumar Singh, Chief Mentor of the Lodha Foundation, said the vision is to create a space where scientific curiosity can thrive without unnecessary constraints.

“The idea is to bring together some of the brightest minds from around the world and give them the freedom to think deeply about physics without constraints. When exceptional minds come together, exceptional outcomes often follow. That is the bet we are making for India,” he said.

The launch also highlights a growing trend in scientific philanthropy. While private funding has long played a role in higher education and healthcare in India, dedicated philanthropic investment in fundamental scientific research has remained relatively limited. Institutions such as LTPI could signal a new model in which private donors complement public investments in building advanced research capacity.

To mark its launch, LTPI is hosting the 10th International Meeting on Emergent Phenomena in Quantum Hall Systems (EPQHS-10), bringing together leading researchers working on quantum matter and condensed matter physics. The event also features a public lecture by Nobel laureate Klaus von Klitzing, whose discovery of the Quantum Hall Effect transformed precision measurement science.

Whether LTPI ultimately succeeds will depend on its ability to attract top scientific talent, produce influential research and establish itself as a globally respected centre for theoretical physics. But its creation raises a broader question for India’s scientific future: can philanthropy help build the institutions needed to support the next generation of fundamental discoveries?

The answer could have implications far beyond physics, shaping how India invests in knowledge creation and scientific excellence in the decades ahead.
