Health

Study Unveils Mucus Molecules That Block Salmonella and Prevent Diarrhea


A new MIT-led study reveals how key mucus molecules naturally shield the gut from dangerous bacteria like Salmonella. The breakthrough opens new pathways for affordable, preventative treatments for travelers and soldiers at risk of infection.

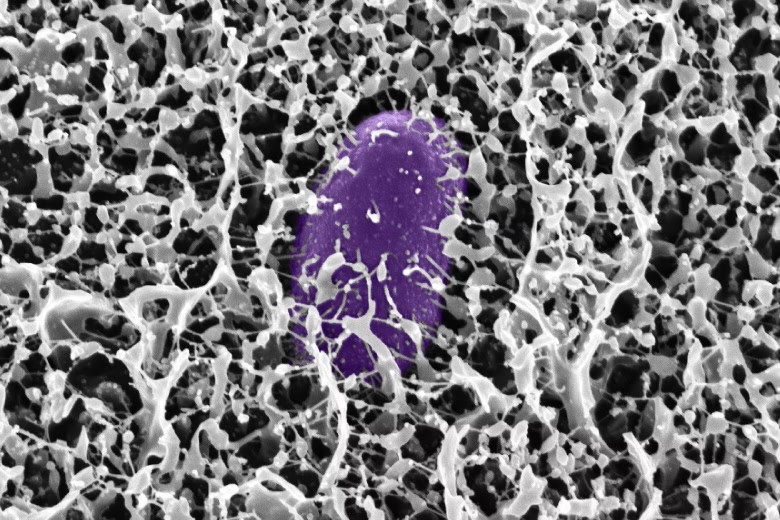

Researchers at MIT have discovered a powerful way the body guards itself against dangerous bacteria: by deploying mucins, special molecules in mucus that neutralize microbes and stop infection before it starts. The team identified mucins in the digestive tract, especially MUC2 and MUC5AC, that shut down the genetic machinery Salmonella uses to invade cells and cause diarrhea.

“By using and reformatting this motif from the natural innate immune system, we hope to develop strategies for preventing diarrhea before it even starts. This approach could provide a low-cost solution to a major global health challenge that costs billions annually in lost productivity, health care expenses, and human suffering,” said Katharina Ribbeck, the Andrew and Erna Viterbi Professor of Biological Engineering at MIT, in a media statement.

In experiments, exposing Salmonella to the intestinal mucin MUC2 turned off HilD, a critical regulator of the genes behind the bacterial proteins that enable infection, shutting down production of those proteins. The study also found that MUC5AC, a similar mucin from the stomach, works the same way, and both molecules appear to protect against multiple foodborne pathogens that are triggered by similar genetic switches.

“We discovered that these mucins not only create a physical shield but also actively control whether pathogens can turn on genes needed for infection,” Ribbeck explained in the media statement.

Lead authors Kelsey Wheeler and Michaela Gold say synthetic versions of these mucins could soon be added to oral rehydration salts or chewable tablets, providing practical protection for troops, travelers, and people in high-risk areas. “Mucin mimics would particularly shine as preventatives, because that’s how the body evolved mucus, as part of this innate immune system to prevent infection,” Wheeler said.


Lancet Commission Launched to Tackle Health and Justice Impacts of Rising Sea Levels

A new Lancet Commission will examine how rising sea levels impact health, equity, and global systems, with experts calling it an urgent crisis.

A new global commission led by The Lancet has been launched to examine the growing health and justice impacts of sea-level rise, as climate change accelerates risks for millions living in coastal and low-lying regions.

The Lancet Commission on Sea-Level Rise, Health and Justice, announced on April 8, brings together 26 international experts to assess how rising seas are reshaping public health, livelihoods, and global equity.

A Growing Crisis Beyond Climate

Sea-level rise, driven by anthropogenic climate change, is already contributing to displacement, food and water insecurity, and changing patterns of infectious diseases. The Commission marks the first major effort to analyse these intersecting risks through a health-focused lens.

“This commission comes at exactly the right time… sea-level rise is no longer a distant threat. It is already disrupting lives, health and wellbeing, especially for the most vulnerable,” said Christiana Figueres, Co-Chair of the Commission and a former UN climate chief.

Experts warn that the impacts extend far beyond environmental damage, affecting the social and economic fabric of vulnerable communities.

“Rising seas don’t just threaten coastlines, they threaten lives, livelihoods, and basic fairness. This is not only a climate problem. It is a health crisis, a justice crisis, and an urgent call for collective action,” said Jemilah Mahmood, a Commissioner on the Lancet Commission and Executive Director of the Sunway Centre for Planetary Health, Malaysia.

An Urgent Global Health Challenge

The Commission is supported by the WHO Asia-Pacific Centre for Environment and Health and aims to generate evidence-based policy recommendations to strengthen adaptation, resilience, and equitable responses.

Dr Sandro Demaio, Director of the WHO Asia-Pacific Centre for Environment and Health (WHO ACE), emphasised the immediacy of the crisis.

“Sea-level rise is no longer a distant threat; it is a public health emergency unfolding now. Through this WHO-supported global Commission, we are clear: inaction is not neutral, it is a choice that puts lives and justice at risk.”

Human Impacts at the Core

The Commission also highlights the disproportionate burden on vulnerable populations, particularly in coastal and low-income regions.

“Rising sea levels are more than an environmental issue; they quietly contaminate water, displace communities, and increase health risks for those least able to cope. Every centimetre of sea level rise is not just a measure of water, but a measure of injustice,” said Kathryn Bowen, Co-Chair of the Commission.

A Defining Policy Moment

With projections suggesting that hundreds of millions of people could be displaced by the end of the century, the Commission aims to inform global policy and strengthen international cooperation.

“Sea-level rise is not just an environmental issue — it is a test of our commitment to people, equity, and future generations,” said Jiho Cha, Member of Parliament, Republic of Korea and Co-Chair of the Commission.

The Commission will contribute to global policy discussions, including international climate platforms, and aims to place human and planetary health at the centre of climate action.


Researchers Develop AI Method That Makes Computer Vision Models More Explainable

A new technique developed by MIT researchers could help make artificial intelligence systems more accurate and transparent in high-stakes fields such as health care and autonomous driving by improving how computer vision models explain their decisions.


Researchers at MIT have developed a new approach to make computer vision models more transparent, offering a potential boost to trust and accountability in safety-critical applications such as medical diagnosis and autonomous driving.

In a media statement, the researchers said the method improves on a widely used explainability technique known as concept bottleneck modeling, which enables AI systems to show the human-understandable concepts behind a prediction. The new approach is designed to produce clearer explanations while also improving prediction accuracy.

Why explainable AI matters

In areas such as health care, users often need more than just a model’s output. They want to understand why a system arrived at a particular conclusion before deciding whether to rely on it. Concept bottleneck models attempt to address that need by forcing an AI system to make predictions through a set of intermediate concepts that humans can interpret.

For example, when analysing a medical image for melanoma, a clinician might define concepts such as “clustered brown dots” or “variegated pigmentation.” The model would first identify those concepts and then use them to arrive at its final prediction.
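To make the two-stage idea concrete, here is a minimal Python sketch of a concept bottleneck, assuming a toy linear model: image features are first mapped to a score for each named concept, and the final prediction is computed from those concept scores alone. The concept names come from the melanoma example above; the function names, weights, and feature values are hypothetical and are not taken from the MIT work.

```python
import math
from typing import Dict, List

# Hypothetical concept set, borrowed from the melanoma example in the text.
CONCEPTS: List[str] = ["clustered brown dots", "variegated pigmentation"]


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_concepts(features: List[float],
                     concept_weights: List[List[float]],
                     concept_biases: List[float]) -> List[float]:
    """Stage 1: map raw image features to a probability for each named concept."""
    return [
        sigmoid(sum(w * f for w, f in zip(row, features)) + b)
        for row, b in zip(concept_weights, concept_biases)
    ]


def predict_label(concept_scores: List[float],
                  label_weights: List[float],
                  label_bias: float) -> float:
    """Stage 2: the final prediction is computed from the concept scores only."""
    z = sum(w * c for w, c in zip(label_weights, concept_scores)) + label_bias
    return sigmoid(z)


def explain(concept_scores: List[float], label_weights: List[float]) -> Dict[str, float]:
    """Each concept's contribution (weight * score) to the final logit."""
    return {name: w * c for name, w, c in zip(CONCEPTS, label_weights, concept_scores)}


if __name__ == "__main__":
    features = [1.0, 0.0, 2.0]  # toy image features, purely illustrative
    scores = predict_concepts(features, [[1.0, 0.0, 0.5], [0.0, 1.0, -0.5]], [0.0, 0.0])
    label_weights = [2.0, 1.0]
    print("concept scores:", scores)
    print("melanoma probability:", predict_label(scores, label_weights, -1.0))
    print("explanation:", explain(scores, label_weights))
```

Because the second stage never sees the raw features, each concept's weight times its score gives a direct, readable account of what pushed the prediction up or down.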

But the researchers said pre-defined concepts can sometimes be too broad, irrelevant or incomplete for a specific task, limiting both the quality of explanations and the model’s performance. To overcome that, the MIT team developed a method that extracts concepts the model has already learned during training and then compels it to use those concepts when making decisions.

The approach relies on two specialised machine-learning models. One extracts the most relevant internal features learned by the target model, while the other translates them into plain-language concepts that humans can understand. This makes it possible to convert a pretrained computer vision model into one capable of explaining its reasoning through interpretable concepts.

“In a sense, we want to be able to read the minds of these computer vision models. A concept bottleneck model is one way for users to tell what the model is thinking and why it made a certain prediction. Because our method uses better concepts, it can lead to higher accuracy and ultimately improve the accountability of black-box AI models,” Antonio De Santis, lead author of the study, said in a media statement.

De Santis is a graduate student at Polytechnic University of Milan and carried out the research while serving as a visiting graduate student at MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL). The paper was co-authored by Schrasing Tong, Marco Brambilla of Polytechnic University of Milan, and Lalana Kagal of CSAIL. The research will be presented at the International Conference on Learning Representations.

Concept bottleneck models have gained attention as a way to improve AI explainability by introducing an intermediate reasoning step between an input image and the final output. In one example, a bird-classification model might identify concepts such as “yellow legs” and “blue wings” before predicting a barn swallow.

However, the researchers noted that these concepts are often generated in advance by humans or large language models, which may not always match the needs of the task. Even when a model is given a fixed concept set, it can still rely on hidden information not visible to users, a challenge known as information leakage.

“These models are trained to maximize performance, so the model might secretly use concepts we are unaware of,” De Santis said in a media statement.

The team’s solution was to tap into the knowledge the model had already acquired from large volumes of training data. Using a sparse autoencoder, the method isolates the most relevant learned features and reconstructs them into a small number of concepts. A multimodal large language model then describes each concept in simple language and labels the training images by marking which concepts are present or absent.
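The feature-extraction step can be illustrated with a textbook sparse autoencoder: a ReLU encoder maps a model's internal feature vector to a wider code in which most entries are zero, a linear decoder reconstructs the original vector, and an L1 penalty on the code encourages sparsity. The sketch below is an assumed, minimal version in plain Python; the paper's actual architecture, loss, and sizes are not described here and may differ, and the concept-naming and image-labelling step performed by the multimodal language model is not shown. All names and toy values are hypothetical.

```python
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Plain matrix-vector product (each row of m dotted with v)."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def sae_forward(x: Vector, w_enc: Matrix, b_enc: Vector,
                w_dec: Matrix, b_dec: Vector) -> Tuple[Vector, Vector]:
    """Encode x into a sparse code h, then decode h back into x_hat."""
    # ReLU zeroes every latent whose pre-activation is negative, giving a sparse code.
    h = [max(0.0, z + b) for z, b in zip(matvec(w_enc, x), b_enc)]
    # Linear decoder reconstructs the original feature vector from the code.
    x_hat = [z + b for z, b in zip(matvec(w_dec, h), b_dec)]
    return h, x_hat


def sae_loss(x: Vector, h: Vector, x_hat: Vector, l1_coeff: float = 1e-3) -> float:
    """Squared reconstruction error plus an L1 penalty that pushes codes toward zero."""
    recon = sum((a - b) ** 2 for a, b in zip(x, x_hat))
    return recon + l1_coeff * sum(abs(v) for v in h)


def top_latents(h: Vector, k: int) -> List[int]:
    """Indices of the k most active latents: candidates to be named as concepts."""
    return sorted(range(len(h)), key=lambda i: h[i], reverse=True)[:k]


if __name__ == "__main__":
    x = [1.0, 2.0]                                   # toy 2-D "model feature" vector
    w_enc = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]  # 3 latents > 2 inputs (overcomplete)
    w_dec = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    h, x_hat = sae_forward(x, w_enc, [0.0] * 3, w_dec, [0.0] * 2)
    print("code:", h, "reconstruction:", x_hat)
    print("loss:", sae_loss(x, h, x_hat), "top latents:", top_latents(h, 2))
```

In training, the encoder and decoder weights would be learned by minimizing this loss over many feature vectors; the toy weights here are hand-picked only so the output is easy to follow.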

The annotated dataset is then used to train a concept bottleneck module, which is inserted into the target model. This forces the model to make predictions using only the extracted concepts.

The researchers said one of the biggest challenges was ensuring that the automatically identified concepts were both accurate and understandable to humans. To reduce the risk of hidden reasoning, the model is limited to just five concepts for each prediction, encouraging it to focus only on the most relevant information and making the explanation easier to follow.
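The five-concept limit can be sketched as a top-k filter placed in front of the classifier: only the k highest-scoring concepts survive, and class scores are computed from those alone, so the surviving concepts double as the explanation. This is a hypothetical illustration, assuming per-image top-k selection and a linear class head; the concept names echo the bird example in the text, and the weights and scores are invented for the demo.

```python
from typing import Dict, List, Tuple


def top_k_indices(scores: List[float], k: int) -> List[int]:
    """Indices of the k highest scores, highest first."""
    return sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)[:k]


def bottleneck_predict(concept_scores: List[float],
                       concept_names: List[str],
                       class_weights: Dict[str, List[float]],
                       k: int = 5) -> Tuple[str, List[str]]:
    """Predict a class using only the top-k concepts; return (class, concepts used)."""
    keep = top_k_indices(concept_scores, k)
    kept = set(keep)
    # Concepts outside the top k are zeroed, so they cannot influence any class score.
    sparse = [s if i in kept else 0.0 for i, s in enumerate(concept_scores)]
    logits = {
        cls: sum(w * s for w, s in zip(weights, sparse))
        for cls, weights in class_weights.items()
    }
    best = max(logits, key=logits.get)
    return best, [concept_names[i] for i in keep]


if __name__ == "__main__":
    names = ["yellow legs", "blue wings", "forked tail", "long beak",
             "red breast", "white belly", "short tail"]
    scores = [0.9, 0.8, 0.7, 0.1, 0.6, 0.5, 0.2]
    weights = {
        "barn swallow": [1.0, 1.0, 1.0, 0.0, 0.0, 0.5, -1.0],
        "robin": [0.0, 0.0, 0.0, 0.0, 2.0, 0.0, 0.0],
    }
    label, used = bottleneck_predict(scores, names, weights)
    print(label, "because of:", used)
```

Capping the number of active concepts trades a little expressiveness for an explanation short enough to read at a glance, which mirrors the motivation the researchers give for the limit.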

When tested against state-of-the-art concept bottleneck models on tasks including bird species classification and skin lesion identification, the new method delivered the highest accuracy while also producing more precise explanations, according to the researchers. It also generated concepts that were more relevant to the images in the dataset.

Still, the team acknowledged that the broader challenge of balancing accuracy and interpretability remains unresolved.

“We’ve shown that extracting concepts from the original model can outperform other CBMs, but there is still a tradeoff between interpretability and accuracy that needs to be addressed. Black-box models that are not interpretable still outperform ours,” De Santis said in a media statement.

Looking ahead, the researchers plan to explore ways to further reduce information leakage, possibly by adding more concept bottleneck modules. They also aim to scale up the method by using a larger multimodal language model to annotate a larger training dataset, which could improve performance further.

This latest work adds to growing efforts to make AI systems not only more powerful, but also more understandable in domains where trust can be as important as accuracy.


Why Planetary Health Is Failing, and How Smarter Communication Can Save It


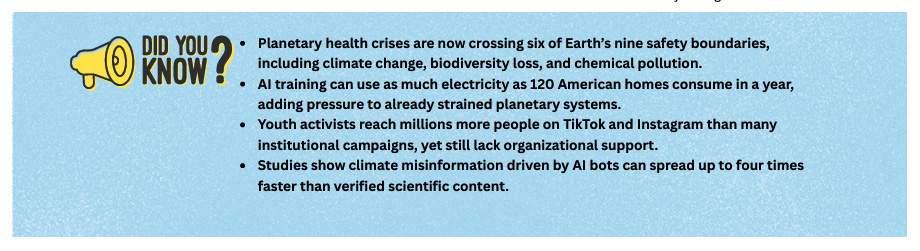

A major report, Voices for Planetary Health: Leveraging AI, Media and Stakeholder Strengths for Effective Narratives to Advance Planetary Health, produced by the Sunway Centre for Planetary Health at Sunway University and implemented by Internews, offers the first systematic mapping of how planetary health issues are communicated across the world. Its conclusion is clear: ineffective, fragmented communication is undermining humanity’s ability to respond to accelerating environmental and health crises.

A Fractured Narrative

The research team analysed 96 organizations and individuals across nine countries through interviews and social media mapping. What they found was striking. Despite decades of science showing the deep interconnections between climate change, pollution, biodiversity loss, and human health, global communication remains disjointed, inconsistent, and highly vulnerable to misinformation.

“We know the science. What we lack is a shared story that resonates across communities, cultures, and decision makers,” said Prof. Dr. Jemilah Mahmood, Executive Director of the Sunway Centre for Planetary Health.

Most communication efforts are siloed: environment separate from health, climate from social justice, science from lived experience. The report notes that short-term projects, scarce resources, and discipline-bound narratives prevent the creation of powerful, sustained public messages capable of shifting policy or behaviour.

AI: Powerful and Dangerous

One of the study’s most urgent insights concerns artificial intelligence.

AI can dramatically expand communication capacity through multilingual translation, rapid content generation, and greater accessibility. But it also creates new risks that threaten planetary health messaging. Generative AI tools can be weaponized to fabricate climate falsehoods—from bot-driven denialist content to deepfake campaigns undermining activists. AI systems also reflect structural bias; research cited in the report shows that many models privilege Western epistemologies while marginalizing Indigenous and local knowledge, contributing to what scholars term “global conservation injustices.”

And AI’s own environmental footprint cannot be ignored. Data centres already consume about 1.5 percent of global electricity, with AI-specialized facilities drawing power comparable to aluminium smelters. Training advanced models such as GPT-4 requires three to five times more energy than GPT-3, an escalation that amplifies the very pressures on the planet that planetary health efforts aim to address.

Communities Most at Risk Are the Least Heard

The communication gap most severely harms those already disproportionately burdened by climate-related health threats. The report highlights how marginalized communities, including low-income groups, Indigenous peoples, and communities of colour, face higher exposure to extreme heat, flooding, respiratory illnesses, vector-borne diseases, and pollution-driven health impacts.

These same communities often lack access to reliable planetary health information. Complex scientific jargon, limited translation, and English-dependent messaging create substantial barriers, leaving many without the knowledge needed to advocate for or protect themselves.

Multiple studies confirm that racially and socioeconomically marginalized communities in the United States experience greater impacts from climate-related health events, including extreme heat, flooding, and respiratory illnesses. Children of colour are particularly vulnerable, experiencing disproportionate health impacts from climate exposures compared to white children. The communication barriers compound these vulnerabilities.

Scientific jargon makes planetary health concepts inaccessible to general audiences, while language delivery challenges, including complex English or lack of translation, further limit reach to non-English-speaking communities.

Yet young people emerge as a rare bright spot. The study finds that youth activists are using digital platforms, especially Instagram, TikTok, and community networks, to push for environmental accountability. But they still confront algorithmic bias, inconsistent platform moderation, and limited institutional support.

A Blueprint for Coherent, Inclusive Communication

To fix the communication failure, the report proposes a dual framework: strategic communication aimed at policy, and democratic communication rooted in community-level dialogue. It outlines six guiding principles: centering marginalized voices; treating planetary health as one integrated story; connecting disciplines and geographies; anticipating backlash and protecting communicators; adapting messages to cultural context; and working with people’s existing mental models.

“Communication is not just a tool; it is a catalyst for change. By speaking with courage, coherence, and compassion, and equipping all actors to tell inclusive stories, we can turn knowledge into action and ensure no voice is left behind,” said Jayalakshmi Shreedhar of Internews.

As political rollbacks weaken environmental safeguards and six of the nine planetary boundaries have already been breached, the stakes could not be higher. Science alone will not save us. A compelling, coherent planetary health narrative, shared across societies, just might.
