Space & Physics

A New Milestone in Quantum Error Correction

This achievement moves quantum computing closer to becoming a transformative tool for science and technology

Quantum computing promises to revolutionize fields like cryptography, drug discovery, and optimization, but it faces a major hurdle: qubits, the fundamental units of quantum computers, are incredibly fragile. They are highly sensitive to external disturbances, making today’s quantum computers too error-prone for practical use. To overcome this, researchers have turned to quantum error correction, a technique that aims to convert many imperfect physical qubits into a smaller number of more reliable logical qubits.

In the 1990s, researchers developed the theoretical foundations for quantum error correction, showing that multiple physical qubits could be combined to create a single, more stable logical qubit. These logical qubits would then perform calculations, essentially turning a system of faulty components into a functional quantum computer. Michael Newman, a researcher at Google Quantum AI, highlights that this approach is the only viable path toward building large-scale quantum computers.

However, the process of quantum error correction has its limits. If physical qubits have a high error rate, adding more qubits can make the situation worse rather than better. But if the error rate of physical qubits falls below a certain threshold, the balance shifts. Adding more qubits can significantly improve the error rate of the logical qubits.

A Breakthrough in Error Correction

In a paper published in Nature last December, Michael Newman and his team at Google Quantum AI reported a major breakthrough in quantum error correction. They demonstrated that as physical qubits are added to a system, the error rate of a logical qubit drops sharply. This finding shows that they’ve crossed the critical threshold where error correction becomes effective. The research marks a significant step forward, moving quantum computers closer to practical, large-scale applications.

The concept of error correction itself isn’t new; it is already used in classical computers. On traditional systems, information is stored as bits, which can be prone to errors. To guard against this, simple error-correcting codes store several copies of each bit, so that an occasional error can be outvoted by the majority. However, in quantum systems, things are more complicated. Unlike classical bits, qubits can suffer from various types of errors, including decoherence and noise, and quantum computing operations themselves can introduce additional errors.

Moreover, unlike classical bits, measuring a qubit’s state directly disturbs it, making it much harder to identify and correct errors without compromising the computation. This makes quantum error correction particularly challenging.
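The classical majority vote described above can be made concrete with a short calculation. The sketch below is only an illustration of the classical idea, assuming each copy of a bit flips independently with the same probability; the function name and the 1 percent error rate are chosen for the example and do not come from the Google paper.

```python
from math import comb


def majority_vote_error(p: float, n: int) -> float:
    """Chance that a majority of n copies are wrong, each flipping with probability p."""
    if n % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    # The vote fails when more than half of the copies have flipped.
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(n // 2 + 1, n + 1))


# Illustrative physical error rate of 1 percent per copy (an assumption).
for copies in (1, 3, 5, 7):
    print(copies, majority_vote_error(0.01, copies))
```

With a 1 percent chance of error per copy, three copies already bring the chance of a wrong vote down to about 0.03 percent. Quantum codes cannot simply copy and read out qubits in this way, which is why the quantum version is so much harder.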

The Quantum Threshold

Quantum error correction relies on the principle of redundancy. To protect quantum information, multiple physical qubits are used to form a logical qubit. However, this redundancy is only beneficial if the error rate is low enough. If the error rate of physical qubits is too high, adding more qubits can make the error correction process counterproductive.

Google’s recent achievement demonstrates that once the error rate of physical qubits drops below a specific threshold, adding more qubits improves the system’s resilience. This breakthrough brings researchers closer to achieving large-scale quantum computing systems capable of solving complex problems that classical computers cannot.
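The threshold behaviour can be sketched with a commonly quoted rule of thumb for codes such as the surface code, in which the logical error rate scales roughly as (p / p_th) raised to the power (d + 1) / 2, where p is the physical error rate, p_th the threshold, and d the code distance (larger d means more physical qubits). The threshold of 1 percent and the prefactor below are illustrative assumptions, not figures from the paper, and the formula is only a trend, not a precise model.

```python
def logical_error_rate(p: float, p_th: float, distance: int, prefactor: float = 0.1) -> float:
    """Rule-of-thumb logical error rate; prefactor and p_th are illustrative assumptions."""
    return prefactor * (p / p_th) ** ((distance + 1) / 2)


P_TH = 0.01  # assumed threshold, for illustration only

for label, p in (("below threshold", 0.005), ("above threshold", 0.012)):
    rates = [logical_error_rate(p, P_TH, d) for d in (3, 5, 7)]
    print(label, rates)
```

Below the assumed threshold, each step up in distance halves the logical error rate in this toy setting; above it, the same step makes the error rate grow.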

Moving Forward

While significant progress has been made, quantum computing still faces many engineering challenges. Quantum systems require extremely controlled environments, such as ultra-low temperatures, and the smallest disturbances can lead to errors. Despite these hurdles, Google’s breakthrough in quantum error correction is a major step toward realizing the full potential of quantum computing.

By improving error correction and ensuring that more reliable logical qubits are created, researchers are steadily paving the way for practical quantum computers. This achievement moves quantum computing closer to becoming a transformative tool for science and technology.

The Universe Is Ringing

How gravitational waves from colliding black holes are opening an entirely new way of exploring the cosmos

More than a century after Albert Einstein predicted them, gravitational waves are transforming astronomy. Ripples in space-time produced by colliding black holes and neutron stars are now being detected routinely, revealing a universe filled with violent mergers and cosmic echoes that have travelled billions of years to reach Earth.

A Ripple Across the Cosmos

When the densest objects in the universe collide, the impact does not simply end with the destruction or merger of stars. It sends ripples through the very fabric of space and time.

These ripples—known as gravitational waves—spread outward at the speed of light, crossing galaxies and cosmic voids for millions or even billions of years. By the time they reach Earth, they are unimaginably faint distortions of space itself.

Yet scientists have learned how to detect them.

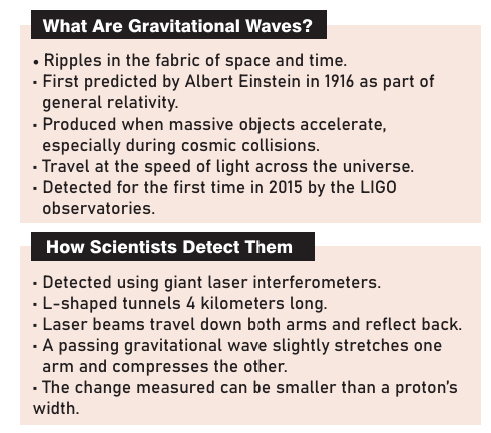

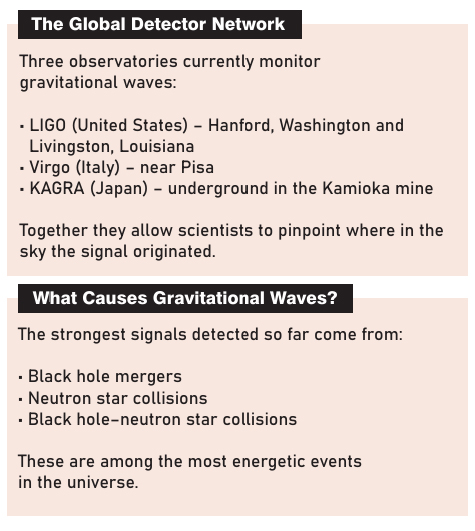

A global network of observatories now monitors these tiny disturbances: the Laser Interferometer Gravitational-Wave Observatory (LIGO) in the United States, the Virgo detector in Italy, and the Kamioka Gravitational Wave Detector (KAGRA) in Japan. Together, these instruments form one of the most sensitive scientific experiments ever constructed, capable of detecting distortions smaller than the width of a proton.

Through them, astronomers have begun to “listen” to the universe.

And what they are hearing is astonishing.

A Universe Filled with Collisions

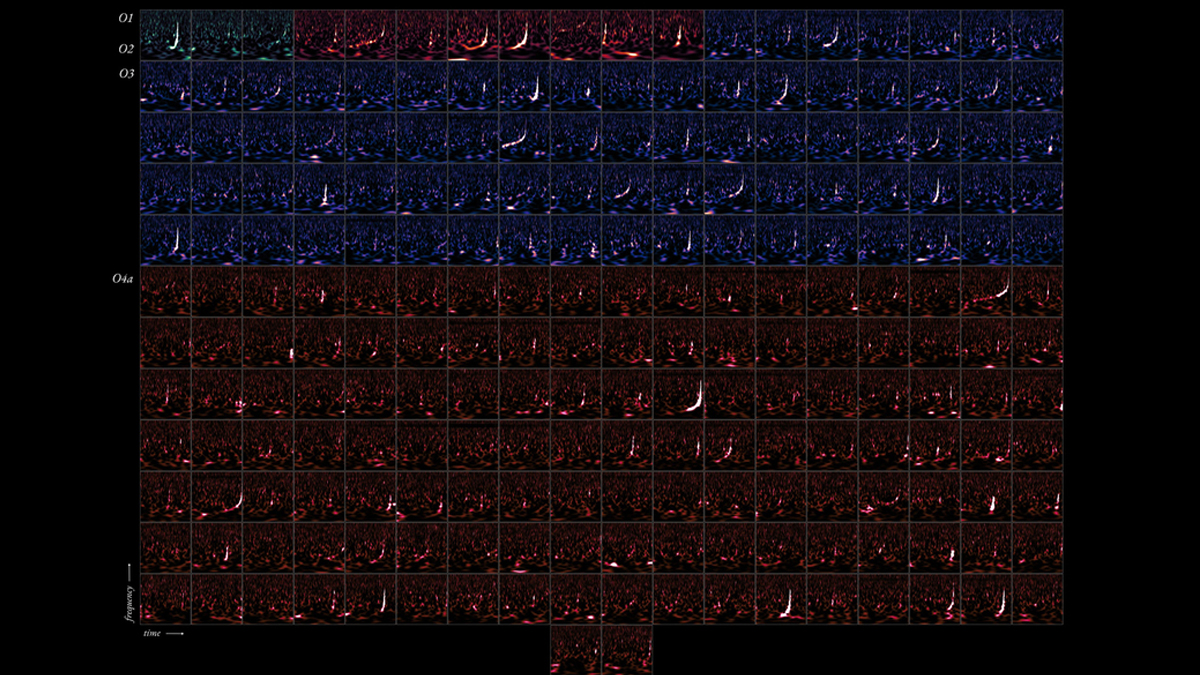

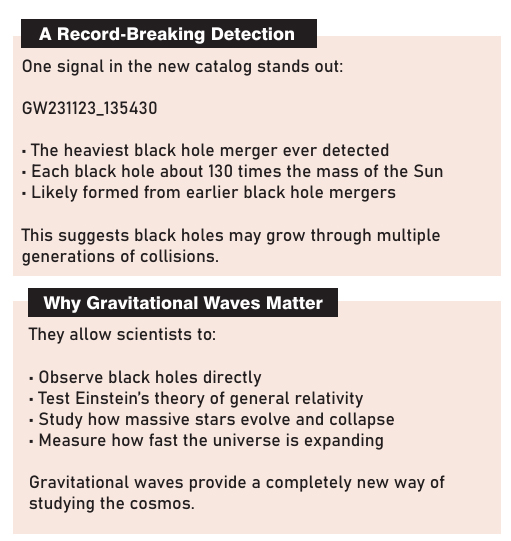

The LIGO–Virgo–KAGRA (LVK) Collaboration has now released the latest compilation of gravitational-wave detections, to appear in a special issue of Astrophysical Journal Letters. The findings suggest that the cosmos is reverberating with collisions far more frequently than scientists once imagined.

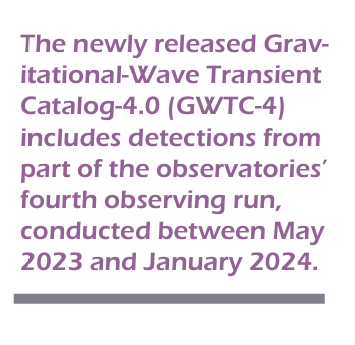

The newly released Gravitational-Wave Transient Catalog-4.0 (GWTC-4) includes detections from part of the observatories’ fourth observing run, conducted between May 2023 and January 2024.

In just nine months, the detectors recorded 128 new gravitational-wave candidates—signals that likely originated from extreme astrophysical events occurring hundreds of millions or billions of light-years away.

This newest batch more than doubles the size of the gravitational-wave catalog, which previously contained 90 candidates from earlier observing runs.

“The beautiful science that we are able to do with this catalog is enabled by significant improvements in the sensitivity of the gravitational-wave detectors as well as more powerful analysis techniques,” says Nergis Mavalvala, a member of the LVK collaboration and dean of the MIT School of Science.

What began in 2015 with the first historic detection has now become a steady stream of discoveries.

“In the past decade, gravitational wave astronomy has progressed from the first detection to the observation of hundreds of black hole mergers,” says Stephen Fairhurst, professor at Cardiff University and spokesperson for the LIGO Scientific Collaboration. “These observations enable us to better understand how black holes form from the collapse of massive stars, probe the cosmological evolution of the universe and provide increasingly rigorous confirmations of the theory of general relativity.”

When Black Holes Dance

Most gravitational waves detected so far originate from binary black holes—pairs of black holes locked in orbit around each other.

Over time, gravity draws them closer together. As they spiral inward, they release enormous amounts of energy in the form of gravitational waves. In the final fraction of a second, the two objects merge in a titanic collision, forming a single, larger black hole.

These cosmic dances are among the most energetic events in the universe.

Black holes themselves are born when massive stars collapse at the end of their lives, compressing enormous amounts of matter into regions so dense that not even light can escape.

Many form in pairs. When they eventually collide, the event sends gravitational waves surging through space.

The first such detection, announced in 2016, confirmed a century-old prediction of Einstein’s theory of general relativity. Since then, dozens—and now hundreds—of similar events have been observed.

But the latest catalog shows that the universe is far more diverse than scientists once believed.

Pushing the Edges of Black Hole Physics

The newly detected signals reveal a remarkable variety of cosmic systems.

Among them are the heaviest black hole binaries ever detected, systems where the masses of the two black holes are strikingly unequal, and pairs spinning at astonishing speeds.

“The message from this catalog is: We are expanding into new parts of what we call ‘parameter space’ and a whole new variety of black holes,” says Daniel Williams, a research fellow at the University of Glasgow. “We are really pushing the edges, and are seeing things that are more massive, spinning faster, and are more astrophysically interesting and unusual.”

One particularly dramatic signal—GW231123_135430—appears to have originated from two enormous black holes, each roughly 130 times the mass of the Sun. Most previously observed mergers involved black holes closer to 30 solar masses.

The extraordinary size of these objects suggests they may themselves have formed from earlier black hole mergers—a kind of cosmic generational chain.

Another remarkable event, GW231028_153006, revealed a binary in which both black holes are spinning at around 40 percent of the speed of light.

And in GW231118_005626, scientists detected an unusually uneven pair where one black hole is roughly twice as massive as the other.

“One of the striking things about our collection of black holes is their broad range of properties,” says Jack Heinzel, an MIT graduate student who contributed to the catalog’s analysis. “Some of them are over 100 times the mass of our sun, others are as small as only a few times the mass of the sun. Some black holes are rapidly spinning, others have no measurable spin.”

“We still don’t completely understand how black holes form in the universe,” he adds, “but our observations offer a crucial insight into these questions.”

Catching a Whisper in Space-Time

Detecting gravitational waves requires extraordinary precision.

The observatories use L-shaped interferometers with arms several kilometers long. Laser beams travel down these tunnels and reflect back to their source.

If a gravitational wave passes through the detector, it slightly stretches one arm while compressing the other, changing the distance the light travels by an incredibly tiny amount.

These changes can be smaller than one-thousandth the diameter of a proton.
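A rough calculation shows where numbers like this come from. The sketch below assumes LIGO’s 4-kilometer arms, a proton diameter of about 1.7 femtometers, and gravitational-wave strains of 1e-21 and 1e-22 as representative orders of magnitude; the strain values are illustrative, not taken from the catalog.

```python
ARM_LENGTH_M = 4_000.0       # LIGO arm length (4 km)
PROTON_DIAMETER_M = 1.7e-15  # approximate proton diameter (assumed value)


def arm_length_change(strain: float, arm_length_m: float = ARM_LENGTH_M) -> float:
    """Order-of-magnitude change in arm length: strain times arm length."""
    return strain * arm_length_m


# Illustrative strain amplitudes, not values from the catalog.
for strain in (1e-21, 1e-22):
    delta = arm_length_change(strain)
    print(f"strain {strain:.0e}: {delta:.1e} m, {delta / PROTON_DIAMETER_M:.1e} proton diameters")
```

Under these assumptions, a strain of 1e-22 changes the arm length by well under one-thousandth of a proton diameter.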

Even with such advanced technology, detections remain unpredictable.

“You can’t ever predict when a gravitational wave is going to come into your detector,” says Amanda Baylor, a graduate student at the University of Wisconsin–Milwaukee who worked on the signal search. “We could have five detections in one day, or one detection every 20 days. The universe is just so random.”

Recent upgrades have dramatically improved the detectors’ reach. LIGO can now detect signals from neutron star collisions up to one billion light-years away, and black hole mergers far beyond that.

Testing Einstein’s Ultimate Theory

Gravitational waves are not only revealing spectacular cosmic events. They are also providing some of the most extreme tests ever conducted of Einstein’s theory of general relativity.

Black holes themselves are one of the most extraordinary predictions of the theory.

“Black holes are one of the most iconic and mind-bending predictions of general relativity,” says Aaron Zimmerman, associate professor of physics at the University of Texas at Austin.

When two black holes collide, he explains, they “shake up space and time more intensely than almost any other process we can imagine observing.”

One particularly powerful signal—GW230814_230901—allowed scientists to analyze the structure of the gravitational wave in exceptional detail.

“So far, the theory is passing all our tests,” Zimmerman says. “But we’re also learning that we have to make even more accurate predictions to keep up with all the data the universe is giving us.”

Measuring the Expansion of the Universe

Gravitational waves are also becoming powerful tools for answering one of cosmology’s biggest questions: how fast the universe is expanding.

Astronomers measure this expansion using the Hubble constant, but different methods have produced conflicting results.

Gravitational waves offer an independent approach.

“Merging black holes have a really unique property: We can tell how far away they are from Earth just from analyzing their signals,” says Rachel Gray, a lecturer at the University of Glasgow.

“So, every merging black hole gives us a measurement of the Hubble constant, and by combining all of the gravitational wave sources together, we can vastly improve how accurate this measurement is.”

Using the current gravitational-wave catalog, scientists estimate that the universe is expanding at roughly 76 kilometers per second per megaparsec.

For now, the uncertainty remains large—but future detections could sharpen the measurement significantly.
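Two simple pieces of arithmetic sit behind this. The sketch below applies Hubble’s law (velocity equals the Hubble constant times distance) using the 76 kilometers per second per megaparsec quoted above, and shows how averaging several independent measurements shrinks the uncertainty. The individual measurements and their error bars are made-up numbers for illustration, and the inverse-variance average is a simplification, not the statistical method used by the collaboration.

```python
from math import sqrt

H0_KM_S_MPC = 76.0  # value quoted in the article


def recession_velocity(distance_mpc: float, h0: float = H0_KM_S_MPC) -> float:
    """Hubble's law, v = H0 * d, a good approximation for nearby galaxies."""
    return h0 * distance_mpc


def combine(measurements):
    """Inverse-variance weighted mean of (value, sigma) pairs."""
    weights = [1.0 / sigma**2 for _, sigma in measurements]
    mean = sum(w * value for w, (value, _) in zip(weights, measurements)) / sum(weights)
    return mean, 1.0 / sqrt(sum(weights))


print(recession_velocity(100.0))  # km/s for a galaxy 100 megaparsecs away

# Hypothetical single-event estimates, each with a large uncertainty of 20.
events = [(70.0, 20.0), (80.0, 20.0), (78.0, 20.0), (76.0, 20.0)]
print(combine(events))
```

In this toy case, four equally uncertain measurements halve the combined uncertainty, which is the basic reason a growing catalog can sharpen the estimate.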

Listening to the Future

Little more than a decade ago, gravitational waves had never been directly detected.

Today, they are transforming astronomy.

With every new detection, scientists gain another glimpse into the hidden life of the universe: the birth of black holes, the evolution of galaxies, and the behavior of gravity under the most extreme conditions imaginable.

“Each new gravitational-wave detection allows us to unlock another piece of the universe’s puzzle in ways we couldn’t just a decade ago,” says Lucy Thomas, a postdoctoral researcher at the Caltech LIGO Lab.

“It’s incredibly exciting to think about what astrophysical mysteries and surprises we can uncover with future observing runs.”

The instruments on Earth are quiet, their lasers moving silently down vacuum tunnels. But far beyond our galaxy, black holes continue to collide.

And with each collision, the universe sends out another ripple—another echo across the cosmos—waiting for us to hear it.

NASA’s Artemis II Captures Stunning ‘Earthset’ Over the Moon

NASA’s Artemis II crew captures a rare Earthset over the Moon, revealing lunar basins, craters, and Earth’s night-day divide.

NASA’s Artemis II mission has captured a striking new perspective of the Moon, showing Earth setting beyond the lunar horizon in a rare and visually dramatic moment from deep space.

The image, taken on April 6, 2026, at 6:41 p.m. EDT by the Artemis II crew during their journey around the far side of the Moon, reveals Earth partially dipping behind the Moon’s curved limb—an event often described as an “Earthset.”

A Geological Snapshot of the Moon

Beyond its visual impact, the image offers a detailed look at the Moon’s complex surface.

The Orientale basin, one of the Moon’s most prominent impact structures, is visible along the edge of the lunar surface. Nearby, the Hertzsprung Basin appears as faint concentric rings, partially disrupted by the younger Vavilov crater, which sits atop the older geological formation.

Also visible are chains of secondary craters—linear indentations formed by debris ejected during the massive impact that created the Orientale basin.

Artemis II: Earth in Shadow and Light

The photograph also captures Earth in a moment of contrast.

The darkened portion of the planet is in nighttime, while the illuminated side reveals swirling cloud formations over Australia and the Oceania region, offering a reminder of Earth’s dynamic atmosphere even from hundreds of thousands of kilometres away.

Artemis II: A New Era of Lunar Exploration

The Artemis II mission marks a major step in NASA’s return to the Moon, carrying astronauts around the Moon for the first time in more than five decades.

Images like this not only provide scientific insights into lunar geology but also offer a powerful visual connection between Earth and its nearest celestial neighbour—highlighting both the scale of space exploration and the fragility of our home planet.

MIT Develops System to Boost Data Centre Efficiency by Up to 94%

MIT researchers develop Sandook, a system that boosts data centre efficiency by up to 94% without new hardware, improving SSD performance.

Researchers at the Massachusetts Institute of Technology (MIT) have developed a new system that significantly improves data centre efficiency by optimising the performance of storage devices, potentially reducing the need for additional hardware.

The system, called Sandook, addresses a persistent challenge in modern data centres—underutilisation of storage devices due to performance variability. By simultaneously tackling multiple sources of inefficiency, the approach delivers substantial performance gains compared to traditional methods.

Data Centre Efficiency: Addressing a Hidden Bottleneck

In data centres, multiple storage devices such as solid-state drives (SSDs) are often pooled together so that applications can share resources. However, differences in device performance mean that slower drives can limit overall system efficiency.

MIT researchers found that these inefficiencies stem from three key factors: hardware variability across devices, conflicts between read and write operations, and unpredictable slowdowns caused by internal processes like garbage collection.

To overcome this, the team developed Sandook, a software-based system designed to manage these issues in real time.
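A small calculation illustrates why one slower drive can hold back a whole pool. The sketch below is not Sandook’s method; it simply compares splitting a job equally across drives with splitting it in proportion to each drive’s speed. The drive speeds and job size are hypothetical.

```python
def finish_time_equal_split(total_gb: float, speeds_gb_s: list) -> float:
    """Every drive gets the same share; the job ends when the slowest drive finishes."""
    share = total_gb / len(speeds_gb_s)
    return max(share / speed for speed in speeds_gb_s)


def finish_time_proportional_split(total_gb: float, speeds_gb_s: list) -> float:
    """Shares match drive speed, so all drives finish at the same moment."""
    return total_gb / sum(speeds_gb_s)


# Hypothetical pool: three drives at 2 GB/s and one straggler at 1 GB/s.
speeds = [2.0, 2.0, 2.0, 1.0]
print(finish_time_equal_split(700, speeds))         # 175.0 seconds
print(finish_time_proportional_split(700, speeds))  # 100.0 seconds
```

In this example, the equal split leaves the three faster drives idle for half the run while they wait for the straggler.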

Two-Tier Intelligent Architecture

The system uses a two-tier architecture, combining a global controller that distributes tasks across devices with local controllers that react quickly to performance slowdowns.

This structure allows Sandook to dynamically balance workloads, rerouting tasks away from devices experiencing delays and optimising performance across the entire system.

The system also profiles the behaviour of individual SSDs, enabling it to anticipate slowdowns and adjust workloads accordingly.
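The two-tier idea can be sketched in a few lines. This is a toy model, not Sandook’s actual design: the class names, the sliding window of eight samples, and the rule that a drive counts as slow when its recent latency exceeds twice its profiled latency are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class LocalController:
    """Watches one drive and flags it when recent latency is far above its profile."""
    name: str
    profiled_latency_ms: float  # expected latency from profiling (hypothetical)
    recent_latencies_ms: list = field(default_factory=list)

    def record(self, latency_ms: float) -> None:
        self.recent_latencies_ms.append(latency_ms)
        del self.recent_latencies_ms[:-8]  # keep only the last eight samples

    def is_slow(self, factor: float = 2.0) -> bool:
        if not self.recent_latencies_ms:
            return False
        average = sum(self.recent_latencies_ms) / len(self.recent_latencies_ms)
        return average > factor * self.profiled_latency_ms


class GlobalController:
    """Sends each request to the healthy drive with the fewest outstanding requests."""

    def __init__(self, local_controllers):
        self.local_controllers = list(local_controllers)
        self.outstanding = {lc.name: 0 for lc in self.local_controllers}

    def route(self) -> str:
        # Fall back to all drives if every one of them is currently flagged as slow.
        healthy = [lc for lc in self.local_controllers if not lc.is_slow()]
        candidates = healthy or self.local_controllers
        target = min(candidates, key=lambda lc: self.outstanding[lc.name])
        self.outstanding[target.name] += 1
        return target.name


drives = [LocalController("ssd0", 0.1), LocalController("ssd1", 0.1)]
controller = GlobalController(drives)
drives[0].record(0.9)  # ssd0 stalls, for example during garbage collection
print([controller.route() for _ in range(3)])
```

Here the stalled drive is skipped until its recent latency recovers, while the global controller keeps balancing requests across whatever drives remain healthy.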

Significant Performance Gains

When tested on real-world tasks such as database operations, AI model training, image compression, and data storage, Sandook demonstrated major improvements.

The system increased throughput by between 12 percent and 94 percent compared to conventional methods, while also improving overall storage utilisation by 23 percent. It enabled SSDs to achieve up to 95 percent of their theoretical maximum performance—without requiring specialised hardware.

A More Sustainable Approach

Researchers emphasised that improving efficiency is critical given the cost and environmental impact of data centre infrastructure.

“There is a tendency to want to throw more resources at a problem to solve it, but that is not sustainable in many ways. We want to be able to maximize the longevity of these very expensive and carbon-intensive resources,” said Gohar Chaudhry, lead author of the study, in a media statement.

“With our adaptive software solution, you can still squeeze a lot of performance out of your existing devices before you need to throw them away and buy new ones,” she added.

Unlocking Untapped Potential

The system also addresses the challenge of inconsistent device behaviour over time.

“I can’t assume all SSDs will behave identically through my entire deployment cycle. Even if I give them all the same workload, some of them will be stragglers, which hurts the net throughput I can achieve,” Chaudhry explained.

By continuously adjusting workloads, Sandook ensures that even underperforming devices contribute effectively without dragging down overall performance.

Researchers say the system could be further enhanced by integrating new storage technologies and adapting to predictable workloads such as artificial intelligence applications.

“Our dynamic solution can unlock more performance for all the SSDs and really push them to the limit. Every bit of capacity you can save really counts at this scale,” Chaudhry said.

Implications

As demand for data processing continues to surge, innovations like Sandook could play a critical role in making data centres more efficient, cost-effective, and environmentally sustainable—without requiring massive infrastructure expansion.
